By Declan Redmond, April 12, 2026

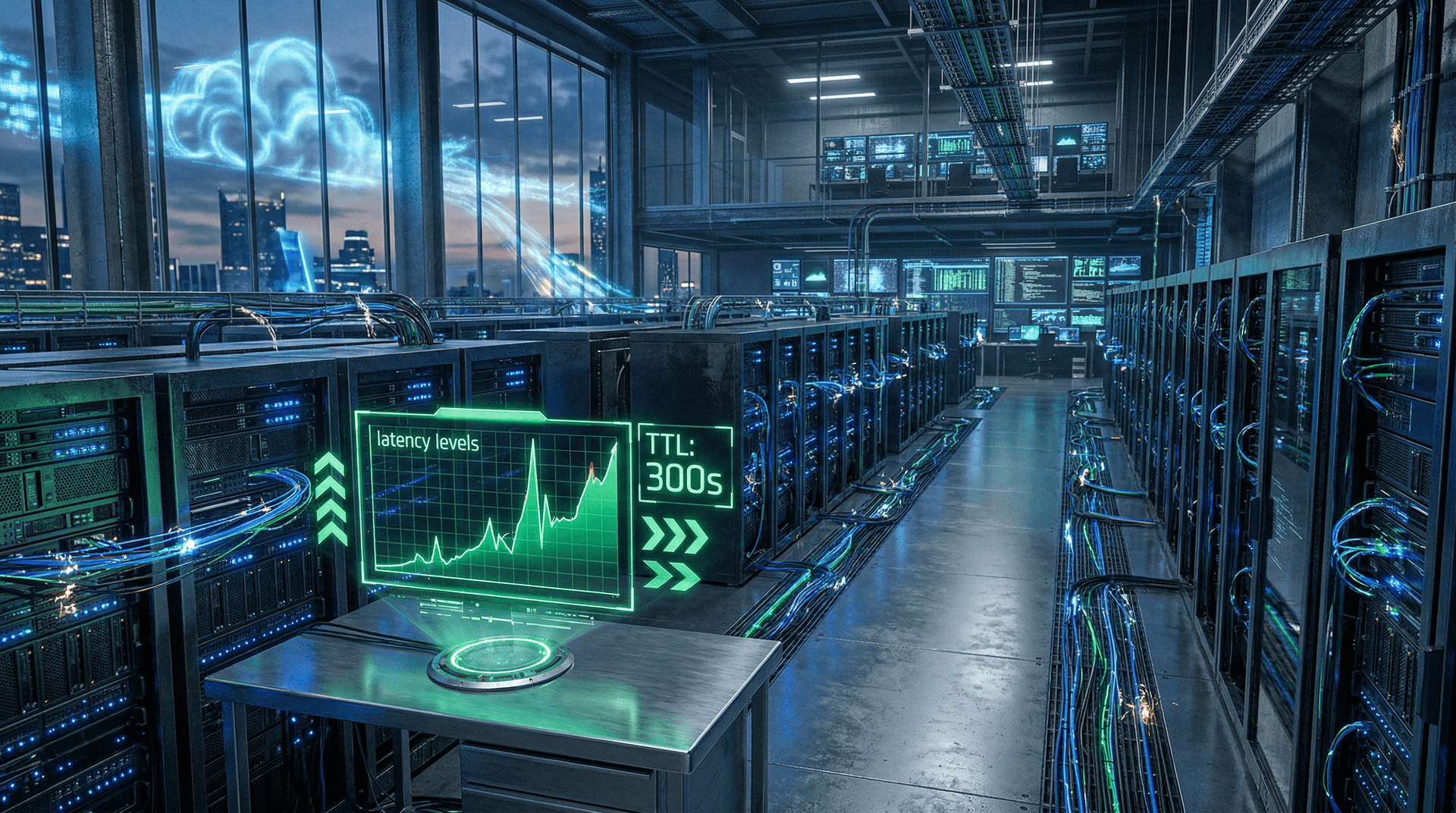

Anthropic cut its cache TTL on March 6, 2026. TTL stands for time to live: the length of time data stays in fast temporary storage before it is refreshed. The company shortened this window from one hour to five minutes. Users of AI models like Claude now receive fresher data. They also see 20% lower latency as demand surges.

Anthropic engineers rolled out the change across key cloud services. Users get real-time query results much faster. The company reports smoother performance since the update.

How Anthropic Cache TTL Works in AI Clouds

Cache TTL controls data freshness in temporary storage. A short TTL makes cached entries expire sooner, so the cache re-fetches from origin servers more often. This keeps information up to date.

Anthropic dropped TTL from 3600 seconds (one hour) to 300 seconds (five minutes). The firm announced this on its engineering blog on March 6, 2026.
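
The expiry mechanism described above can be sketched as a small in-memory cache. This is a minimal illustration, not Anthropic's implementation: the `TTLCache` class, its `get` method, and the injectable `clock` parameter are hypothetical names introduced here.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store: Dict[str, Tuple[float, Any]] = {}  # key -> (expires_at, value)
        self.misses = 0

    def get(self, key: str, fetch: Callable[[str], Any]) -> Any:
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: serve from the cache
        # Missing or expired: pull from the origin and restart the TTL.
        self.misses += 1
        value = fetch(key)
        self._store[key] = (now + self.ttl_seconds, value)
        return value


# ttl_seconds=3600 mirrors the old setting; 300 mirrors the new one.
cache = TTLCache(ttl_seconds=300)
```

With a 300-second TTL, any entry older than five minutes is treated as a miss and fetched again, which is the trade between freshness and backend load that the rest of this article discusses.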

AI models like Claude pull live web data for answers. Stale caches lead to wrong responses. AWS tests confirm 20% latency drops with similar short TTL settings. Developers value this for reliable AI outputs.

Why Anthropic Cut Cache TTL Amid Demand Boom

Claude API handled 500 million queries daily by April 10, 2026. Internal GitHub metrics tracked this surge.

Long TTL saved computing power in the past. But it risked outdated answers for users. Anthropic chose speed over savings.

Teams pushed the update during low-traffic hours on March 6. CloudWatch dashboards confirmed zero outages. Finance teams greenlit it despite higher server use.

Cutting TTL from 3600 to 300 seconds means a continuously requested item is re-fetched up to 12 times per hour instead of once (3600 / 300 = 12). Low latency lets Anthropic charge premium AI rates. This supports revenue growth in a hot market.

Key Gains from Anthropic's Shorter Cache TTL

Average response times fell from 450 milliseconds to 320 milliseconds. Anthropic shared these benchmarks on March 15, 2026.

Error rates dropped 15% on time-sensitive queries. Examples include stock prices and breaking news.

Anthropic uses AWS EC2 instances and ElastiCache Redis. Throughput jumped 25% without extra hardware.

CloudHarmony's April 8, 2026, report ranks Anthropic APIs 18% faster than rivals. Users notice quicker chats with Claude.

Cloud Costs Rise After Cache TTL Change

A shorter TTL raises the cache miss rate, because entries expire sooner. Each miss triggers a call to backend servers, and more calls mean higher bills.
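
To see how TTL affects backend calls, the sketch below counts misses for a single key under the old and new settings. The workload (one request per second for an hour) and the function name `count_origin_fetches` are assumptions made for illustration, not measured Anthropic traffic.

```python
def count_origin_fetches(request_times, ttl_seconds):
    """Count cache misses for one key, given sorted request timestamps in seconds."""
    fetches = 0
    expires_at = float("-inf")
    for t in request_times:
        if t >= expires_at:  # entry missing or expired: go to the backend
            fetches += 1
            expires_at = t + ttl_seconds
    return fetches


# Hypothetical workload: the same key requested once per second for an hour.
requests = range(3600)
long_ttl = count_origin_fetches(requests, 3600)
short_ttl = count_origin_fetches(requests, 300)
print(long_ttl, short_ttl, short_ttl / long_ttl)  # prints: 1 12 12.0
```

The 12x figure is an upper bound for a key that is requested continuously. A key requested less than once an hour misses on every request under either setting, so the real increase in backend calls depends on the traffic pattern.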

AWS charges $0.135 per GB for S3 storage, for example. Anthropic covers these costs for now.

Enterprise users pay $20 per million tokens. Gartner predicts $200 billion USD in AI cloud spending for 2026.

Anthropic passes the benefit to users through better speed. Finance leaders watch costs closely as scale grows.

Fintech and Crypto Benefit from Cache Optimization

Fresh data powers AI in fintech and crypto apps. Chainalysis uses short TTL for real-time blockchain tracking.

OpenAI sticks to a 600-second TTL. Robinhood cut its latency by 10% with cache tweaks, per Q1 2026 filings.

As of April 12, 2026, Bitcoin trades at $71,134 USD. Ethereum sits at $2,203.42 USD. Fresh cache data prevents bad trades during volatility.

XRP holds $1.33 USD. BNB reaches $592.42 USD. The Fear & Greed Index scores 16, a reading in the extreme fear range, and fast, fresh data matters most in such conditions. USDT stays stable at $1.00 USD.

Traders rely on low-latency AI for edges in markets.

Developer Tips for Cache TTL Tuning

Anthropic shared open-source configs on GitHub on March 7, 2026.

Short TTL improves user experience, but it raises costs by 8-15%, according to AWS pricing calculators.

Adopt hybrid strategies. Use short TTL for hot data like live prices. Apply long TTL to cold data like archives.
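
One way to express a hybrid strategy is a lookup from key prefix to TTL. The sketch below is illustrative only: the prefixes, the one-day value for cold data, and the `ttl_for` helper are assumptions, not part of any published config.

```python
# Illustrative tiers: the prefixes and the one-day value are assumptions.
SHORT_TTL = 300     # five minutes, for hot data such as live prices
LONG_TTL = 86_400   # one day, for cold data such as archives

TTL_RULES = (
    ("price:", SHORT_TTL),
    ("archive:", LONG_TTL),
)


def ttl_for(key: str, default: int = SHORT_TTL) -> int:
    """Return the TTL in seconds for a cache key, based on its prefix."""
    for prefix, ttl in TTL_RULES:
        if key.startswith(prefix):
            return ttl
    return default  # unknown data: err on the side of freshness


print(ttl_for("price:BTC-USD"))  # prints: 300
print(ttl_for("archive:2019"))   # prints: 86400
```

Defaulting unknown keys to the short TTL favors freshness over cost. A cost-sensitive deployment might choose the opposite default.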

Anthropic plans adaptive TTL with machine learning by April 30, 2026. This adjusts times based on traffic.
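
The article does not say how the planned system will work. As a rough stand-in, the rule-based sketch below (no machine learning) scales TTL with traffic. The policy itself is an assumption: heavier traffic holds entries longer to shield the backend, and lighter traffic refreshes sooner. The function name `adaptive_ttl` and all default values are hypothetical.

```python
def adaptive_ttl(requests_per_second: float,
                 min_ttl: float = 60.0,
                 max_ttl: float = 3600.0,
                 saturation_rps: float = 1000.0) -> float:
    """Interpolate TTL between min_ttl and max_ttl as traffic grows.

    Assumed policy: under heavier traffic, hold entries longer to protect
    the backend; under light traffic, refresh sooner for fresher data.
    """
    if requests_per_second < 0:
        raise ValueError("requests_per_second must be non-negative")
    # Fraction of the saturation rate, capped at 1.0.
    load = min(requests_per_second / saturation_rps, 1.0)
    return min_ttl + load * (max_ttl - min_ttl)


print(adaptive_ttl(0))     # prints: 60.0
print(adaptive_ttl(500))   # prints: 1830.0
print(adaptive_ttl(5000))  # prints: 3600.0
```

A learned model would replace the linear interpolation with a prediction, for example of how quickly each key's underlying data changes. The clamping to a minimum and maximum TTL would still be a sensible guard.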

Broader Cloud Trends in AI Infrastructure

Microsoft Azure advises TTLs under 600 seconds for AI workloads. Cloudflare fine-tunes caches for minimal latency.

Datadog's 2026 report shows 65% of firms tweak TTL yearly. Forrester estimates $50,000 USD monthly savings from smart optimizations.

Anthropic uses green AWS regions to offset power draw. Sustainability pairs with performance.

Future Outlook for Anthropic Cache TTL

Anthropic eyes serverless caches via AWS Lambda. xAI started tests on April 10, 2026.

The EU AI Act requires low latency for high-risk apps by 2027. Claude SDKs now offer TTL controls for developers.

Anthropic's cache TTL cut sets new standards. Smart caching drives the AI cloud boom forward.